ChatGPT ignored your best page

❌ Everything your team thinks drives AI visibility is wrong.

Hello Readers 🥰

Your content team spent three weeks on that pillar page. Comprehensive, well-structured, internally linked, the whole thing.

ChatGPT has never once cited it. And every week it sits at position 8 is a week your competitors at position 1 collect citations you'll never get back.

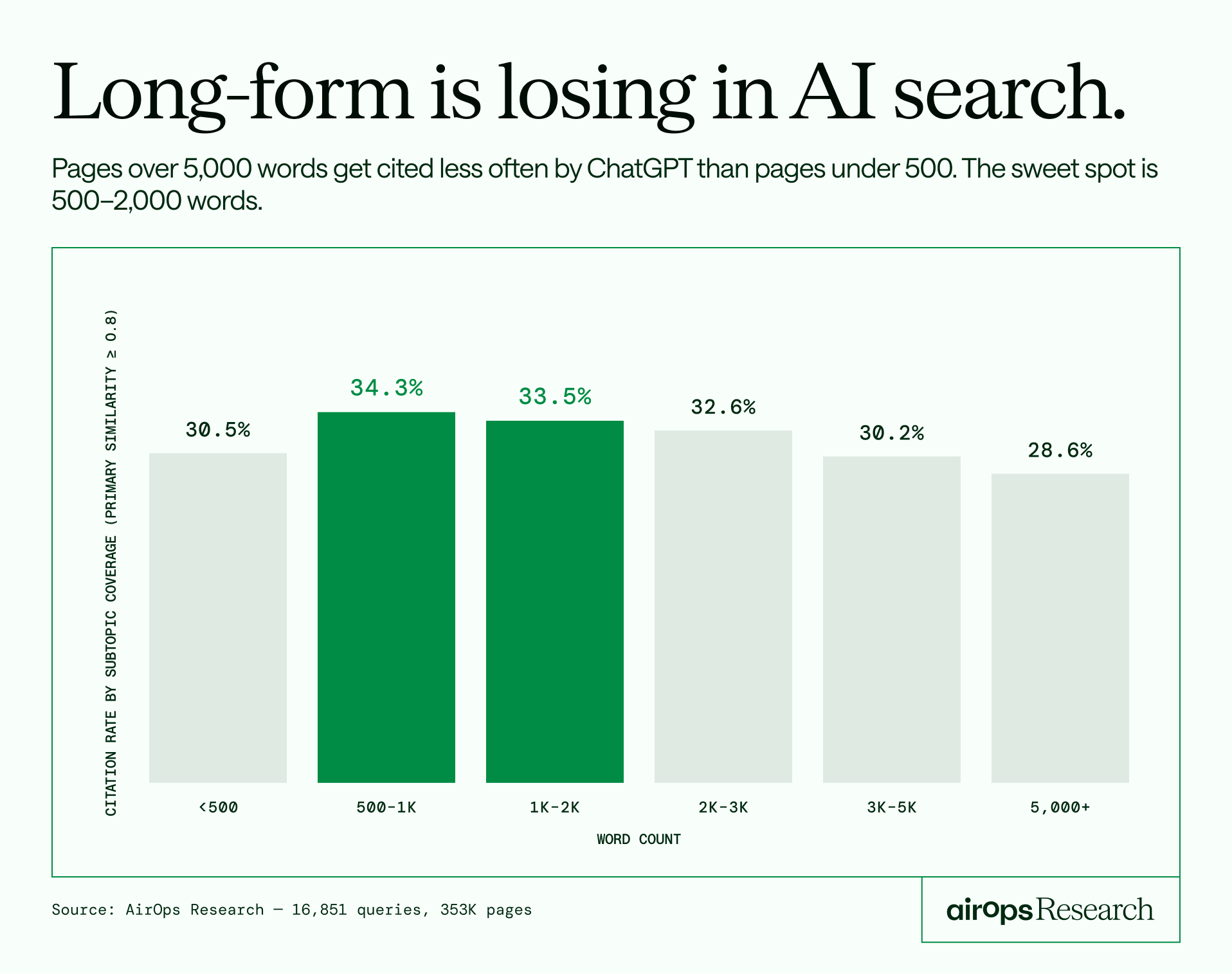

Here's what 353,799 pages' worth of data says is actually happening.

AirOps analyzed 16,851 ChatGPT queries and 353,799 pages across 10 industries to map exactly what happens between a user's question and the source that gets cited.

Three findings stand out:

⚡ Your headings decide if AI cites you before anything else does - Pages whose headings directly match the user's query get cited 41% of the time. Pages with loosely matched headings get cited 29% of the time. Everything else is secondary.

🔍 One invisible tag is worth more than your entire backlink strategy - Pages with JSON-LD schema markup get cited 38.5% of the time vs. 32% without it. That's a 6.5-point advantage, and it holds regardless of word count, headings, or domain authority.

📄 Your longest pages are your least cited ones - Pages over 5,000 words get cited less often than pages under 500 words. The content your team is most proud of is probably the least visible to AI.
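If the JSON-LD point above feels abstract, here is a rough sketch of what that "invisible tag" can look like. It uses the public schema.org `Article` type. The function name `article_jsonld` and every value passed to it are made-up placeholders, and the report summary here doesn't say which schema types were measured, so treat this as one plausible starting point rather than the recipe.

```python
import json


def article_jsonld(headline, author, date_published, url):
    """Build a <script> tag holding minimal schema.org Article markup.

    Hypothetical helper for illustration only; swap in your own fields.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so nothing in the payload can close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values, not taken from the report.
print(article_jsonld(
    "How to choose a CRM",
    "Jane Doe",
    "2025-01-15",
    "https://example.com/how-to-choose-a-crm",
))
```

The printed tag goes in the page's HTML, typically in the `<head>`. Readers never see it, but anything parsing the page can.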

And the signal your whole strategy might be built around?

Domain authority (DA) shows zero positive correlation with AI citation. Always-cited pages have lower DA than never-cited ones. ChatGPT evaluates the page directly, not who links to it.

This is exactly what AirOps built the Fan-Out Effect Report to show you. Not theory or best-practice guesses. Controlled comparisons across 20 signals pulled from 353,799 real pages, so you know precisely what to fix, what to stop building, and what to prioritize this quarter.

Read it today. By Monday, you'll know exactly which pages are bleeding AI visibility, which ones to refresh first, and which content strategy calls to stop making altogether.